The Battle Against Machine-Generated Text: Challenges and Solutions

The Challenge of Machine-Generated Text

Machine-generated text has been fooling human readers since at least 2019, when GPT-2 was released. Since then, large language model (LLM) tools have improved immensely at producing realistic content.

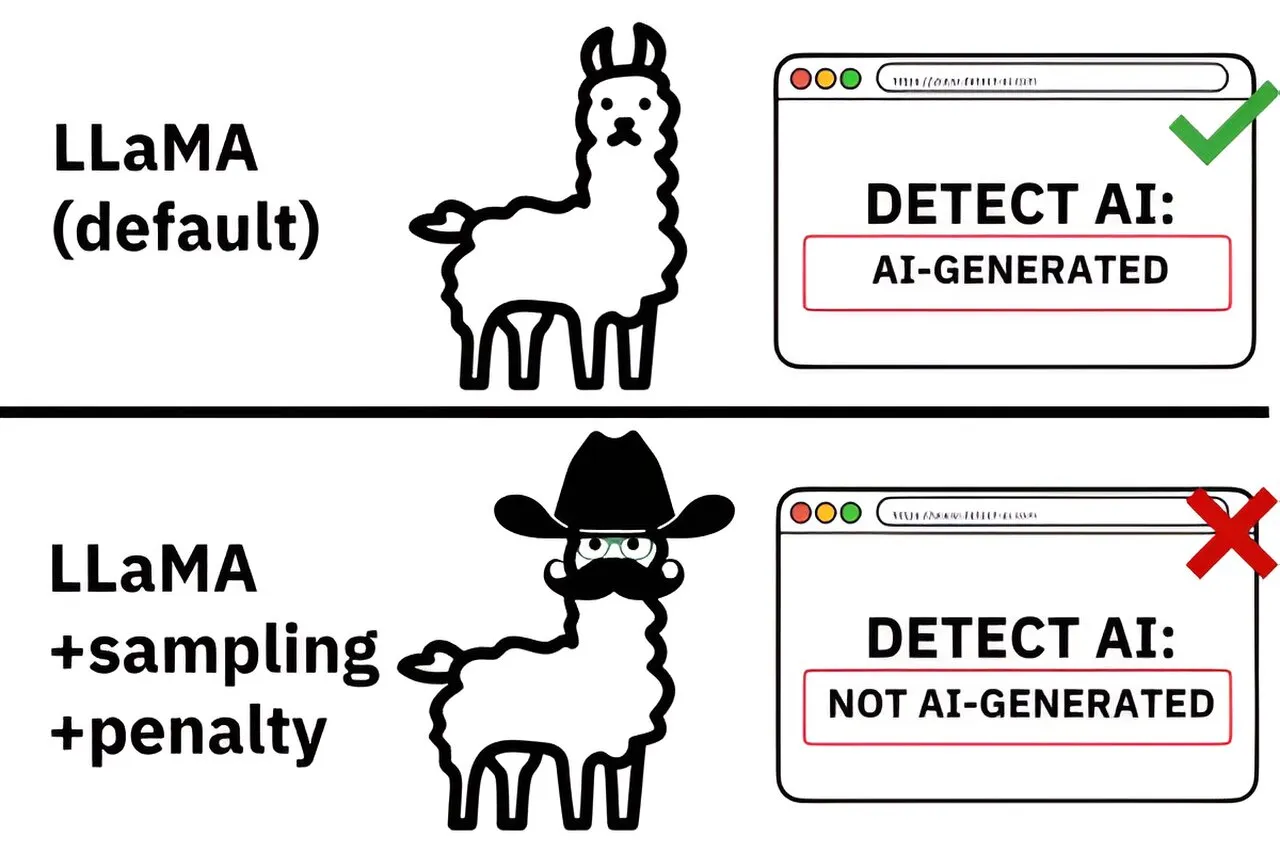

The Arms Race of Technology

- Improvement of LLMs: These tools have become better at mimicking human writing.

- Concerns Over Misinformation: The risk of spreading false information has increased.

- Detection Methods: Ongoing research is focused on developing effective detection techniques.

Conclusion

As technology advances, the battle between human writers and machine-generated content continues. It is vital for both industry professionals and the general public to stay aware of these changes and to develop robust methods for identifying AI-generated text and preventing its misuse.
