Oracle Launches Pre-Orders for 131,072 Nvidia Blackwell GPUs in Cloud for Generative AI

Expanding Capacity with Nvidia Blackwell GPUs

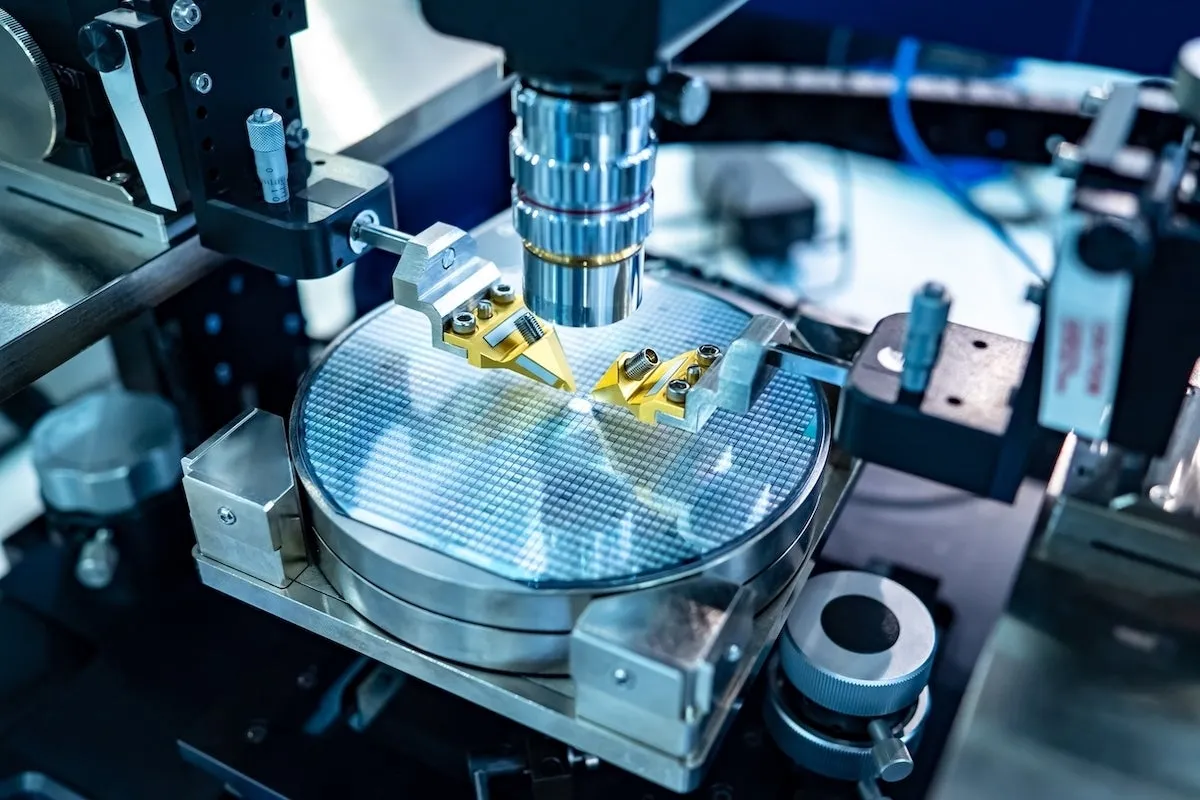

Oracle is taking pre-orders for 131,072 Nvidia Blackwell GPUs in its Oracle Cloud Infrastructure (OCI) Supercluster, capacity aimed at generative AI workloads.

Meeting the Demand for High Performance

Oracle announced the offering at its CloudWorld 2024 conference. The GPUs, used to train large language models (LLMs), arrive amid strong demand and a global shortage of high-bandwidth memory (HBM).

- GPU wait times of 18 months have been reported, attributed to HBM shortages.

- Generative AI is driving GPU demand at firms such as AWS, Google, and OpenAI.

Unprecedented Cloud Infrastructure

Oracle's OCI Superclusters will use Nvidia GB200 NVL72 liquid-cooled bare-metal instances. Within a single NVLink domain, up to 72 Blackwell GPUs can communicate with one another at an aggregate bandwidth of 129.6 TBps. The design is intended to speed up LLM training.
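The 129.6 TBps figure can be sanity-checked with simple arithmetic. The sketch below assumes two numbers that the article does not state: 72 GPUs per GB200 NVL72 NVLink domain and 1.8 TB/s of NVLink bandwidth per GPU. The function names are illustrative only. The domain count is a rough upper-level estimate that assumes every one of the 131,072 GPUs sits in an NVL72 domain, which Oracle has not confirmed.

```python
import math

# Assumptions (not stated in the article):
GPUS_PER_NVL72_DOMAIN = 72   # GPUs sharing one NVLink domain
NVLINK_TBPS_PER_GPU = 1.8    # per-GPU NVLink bandwidth, in TB/s

# From the article:
TOTAL_GPUS = 131_072


def aggregate_bandwidth_tbps(gpus: int, per_gpu_tbps: float) -> float:
    """Sum of per-GPU NVLink bandwidth across one domain, rounded to 0.1 TB/s."""
    return round(gpus * per_gpu_tbps, 1)


def domains_needed(total_gpus: int, gpus_per_domain: int) -> int:
    """Number of NVLink domains required to hold total_gpus (rounded up)."""
    return math.ceil(total_gpus / gpus_per_domain)


print(aggregate_bandwidth_tbps(GPUS_PER_NVL72_DOMAIN, NVLINK_TBPS_PER_GPU))
print(domains_needed(TOTAL_GPUS, GPUS_PER_NVL72_DOMAIN))
```

Under these assumptions, the aggregate bandwidth matches the 129.6 TBps quoted for one domain, and the full GPU count would span roughly 1,821 such domains.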

Competitive Advantage in the Cloud GPU Market

Oracle's offering competes with Blackwell-based services from other cloud providers.

- AWS's Project Ceiba, built with Nvidia, is planned to include 20,736 Blackwell GPUs.

- Google Cloud is also preparing to launch a Blackwell GPU service.

Despite the competition, Oracle says it will offer more Blackwell GPUs than any other provider, a strategic move in a fast-growing market.
